Point-of-Care Ultrasound Bootcamp Training: A Pilot Program for Internal Medicine Residency

Saturday, December 14, 2024 at 8:00AM

Mariel Ma, MD (1); Firas Abbas, MD (1); Daniel Puebla Neira, MD (2); Jordan Merz, MD (3); Walter Migotto, MD (2); Manoj Mathew, MD (2)

(1) Department of Internal Medicine, University of Arizona College of Medicine – Phoenix, Arizona, USA

(2) Division of Pulmonary Critical Care Medicine, University of Arizona College of Medicine – Phoenix, Arizona, USA

(3) Department of Medicine-Pediatrics, University of Arizona College of Medicine – Phoenix, Arizona, USA

Abstract

Background: The goal of the study was to develop a pilot program to assess point-of-care ultrasound (POCUS) knowledge and proficiency via bootcamp-style education. The primary endpoints were objective measures of trainees’ ability to learn POCUS and their interest in doing so.

Methods: A POCUS education program was designed for the orientation of 41 post-graduate-year-1 trainees in an internal medicine residency program. Trainees were provided brief lectures on lower extremity veins, lung, and abdominal pathologies before proceeding to stations to practice ultrasound skills. An anonymous test was completed by each participant before and after the lectures and practice time. The percent correct for each question before and after the intervention was compared using a paired t-test. The study was determined to be exempt from review by the University of Arizona IRB.

Results: All trainees (100%) improved their knowledge of ultrasound on a post-didactic assessment, and the improvement was statistically significant for all questions except one. The average percent correct was 46% on the pretest and 84% on the posttest (p<.001). Feedback on the sessions was summarized using a word cloud. More trainees reported interest in applying POCUS to clinical practice after the session. Trainees found videos, case examples, and small groups helpful. Suggested areas for improvement included more practice time, feedback on images obtained, and teaching of cardiac ultrasound.

Conclusion: Internal medicine trainees were able to effectively learn the basics of POCUS, and they were more likely to use ultrasound after gaining knowledge.

Abbreviations

ACP: American College of Physicians

ATS: American Thoracic Society

CHEST: American College of Chest Physicians

FAST exam: focused assessment with sonography in trauma

ICU: intensive care unit

IRB: Institutional Review Board

PGY1: post-graduate-year-1

POCUS: point-of-care ultrasound

Introduction

Point-of-care ultrasound (POCUS) has become increasingly popular in medicine due to its ease of access, reduction in need for consultative ultrasonography, and usefulness in diagnosing common conditions (1-4). The use of POCUS in emergency rooms, intensive care units (ICUs), and surgical and medical wards has been established. The availability of ultrasound machines and handheld ultrasound devices has allowed for rapid assessment of patients by teams responding to cardiopulmonary arrest codes and rapid responses, and by teams evaluating patients with hemodynamic instability (1,5). Portable ultrasound devices have also facilitated increased use of POCUS, leading to reduced times to diagnosis and changes in management (6). Furthermore, utilizing POCUS has been found to lower complications, improve outcomes, and increase patient safety in procedures such as thoracentesis and central venous catheter placement (5).

Due to the increasing use of POCUS and its benefits in practice, there is growing interest in developing ultrasound skills for internal medicine residents (7). The American College of Physicians (ACP) issued a statement acknowledging the importance of POCUS in internal medicine with the goal of establishing a roadmap for POCUS education and training (8). The Society of Hospital Medicine also recognized the many advantages of POCUS and the growing interest among hospitalists (9). Emergency medicine residency programs have integrated POCUS training as a requirement; however, many internal medicine residency programs in the United States do not have consistent training in ultrasound (10,11). Barriers to establishing a POCUS curriculum for internal medicine trainees include limited equipment, a limited number of trained faculty, and time constraints related to patient care (1,2,12-14). Considering the limited time available to teach during clinical practice, we developed a one-day educational program to improve internal medicine trainees’ foundational knowledge and skills in ultrasound. The content was tailored to common problems internal medicine physicians would encounter. We evaluated the residents’ ultrasound knowledge before and after the training session and assessed their perceptions of the training day and their likelihood of using ultrasound in residency with their newly acquired skills.

Methods

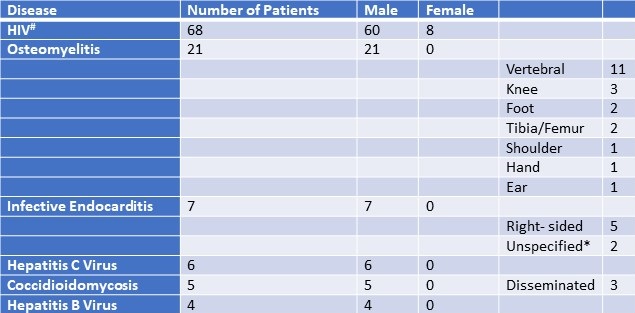

A pilot educational program was implemented during post-graduate-year-1 (PGY1) orientation at the beginning of the 2023-2024 academic year. Participants were from the University of Arizona College of Medicine Phoenix Internal Medicine Residency Program. The program lasted eight hours, with approximately four hours spent in lectures and four hours in practice sessions. Lectures and educational material were developed by faculty trained in pulmonary and critical care medicine, as well as one faculty member who is the point-of-care ultrasound director of our hospital. The material was based on the authors’ prior experience during fellowship training and participation in national conferences such as those of the American College of Chest Physicians (CHEST) and the American Thoracic Society (ATS). The lectures covered basic ultrasound anatomy used in the daily practice of medicine in the ICU and hospital wards, focusing on lower extremity venous anatomy and deep vein thrombosis as well as common respiratory and abdominal pathologies (Table 1). Before the educational interventions, participants completed an anonymous pretest survey assessing baseline ultrasound knowledge and their plans to incorporate POCUS into clinical practice. The surveys were provided via REDCap and comprised 19 questions. Thirteen of the questions tested knowledge, and the rest assessed interest in the use of POCUS before and after the program (Supplementary Material).

The second half of the program focused on practice sessions in small groups. Each group consisted of one preceptor, 4-5 trainees, and one volunteer human model recruited from the community. Preceptors were proficient in ultrasound through their training in hospital medicine as well as pulmonary and critical care medicine. Each session provided a conventional ultrasound machine and a handheld portable ultrasound device for comparison. The objective of the stations was to practice using ultrasound to identify vessels, lungs, and anatomy related to the focused assessment with sonography in trauma (FAST) exam. Of note, we did not use the BLUE ultrasound decision tree per se, but we did instruct the trainees to identify A and B lines as well as pleural sliding on lung ultrasonography. We also taught trainees to evaluate for deep venous thrombosis in the lower extremity. At the end of the program, each trainee completed a follow-up anonymous posttest survey that included feedback.

The data were deidentified and stored in a Microsoft Excel spreadsheet. Using Excel, the pretest and posttest results were compared using a paired t-test (statistical significance was defined as a p-value <0.05). A word cloud of the most frequently mentioned words in the feedback sections of the surveys was generated using Microsoft PowerPoint. The study was determined to be exempt from review by the University of Arizona Institutional Review Board (IRB).
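The paired t-test named above can be illustrated with a minimal sketch. The study performed this comparison in Excel; the Python below is only an illustration, the function name `paired_t_statistic` is hypothetical, and the percent-correct values are invented for the example and are not study data. It computes the t statistic as the mean of the paired differences divided by its standard error.

```python
import math
from statistics import mean, stdev


def paired_t_statistic(before, after):
    """Return (t, degrees_of_freedom) for a paired t-test on matched samples."""
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    n = len(before)
    if n < 2:
        raise ValueError("need at least two pairs")
    # Work on the per-pair differences (after minus before).
    diffs = [a - b for b, a in zip(before, after)]
    sd = stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("differences have zero variance")
    # t = mean difference / standard error of the mean difference
    t = mean(diffs) / (sd / math.sqrt(n))
    return t, n - 1


# Hypothetical percent-correct values for five questions (NOT study data).
pretest = [40.0, 55.0, 30.0, 60.0, 45.0]
posttest = [80.0, 85.0, 75.0, 90.0, 85.0]

t_stat, dof = paired_t_statistic(pretest, posttest)
print(f"t = {t_stat:.2f}, df = {dof}")
```

Converting the t statistic to a p-value requires the t distribution with the reported degrees of freedom; a statistics package (for example, `scipy.stats.ttest_rel` in SciPy) returns both values directly.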

Table 1. Summary of educational content.

Results

Forty-one PGY1 trainees from the University of Arizona College of Medicine Phoenix Internal Medicine Residency Program completed the pretest survey, and 40 trainees completed the posttest survey. From the pretest survey, 78% of trainees were somewhat familiar to very familiar with POCUS, and 95% reported that they thought POCUS improves patient care. Almost 88% of trainees had plans to incorporate POCUS into their clinical practice.

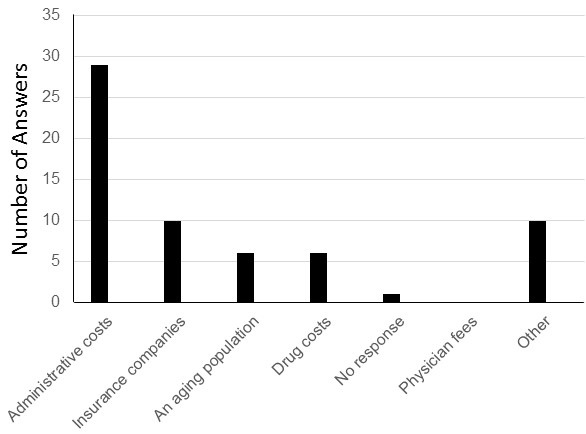

The survey also evaluated basic POCUS knowledge before and after the educational intervention. The percent correct improved substantially for all 13 questions assessing knowledge, and the improvement was statistically significant for all but one question (Table 2). Specifically, the question regarding ultrasound settings had an increase in the number correct, but the result was not statistically significant (pretest correct 56%, posttest correct 76%, p=.103). The average percent correct was 46% on the pretest and 84% on the posttest (p<.001). Posttest understanding of probe identification, probe handling, and use of M-mode, all of which are important foundations for learning POCUS, increased significantly. Overall, trainees were able to effectively acquire foundational knowledge in ultrasound after a one-day training session. In addition, before the educational intervention 88% of trainees had plans to use POCUS during clinical practice, but after the intervention 100% of trainees thought ultrasound was beneficial and reported willingness to use ultrasound in their clinical practice (p=.023).

Table 2. Percent correct per question was compared and a paired t-test was performed for each one. Note that the second-to-last question had one missing answer posttest.

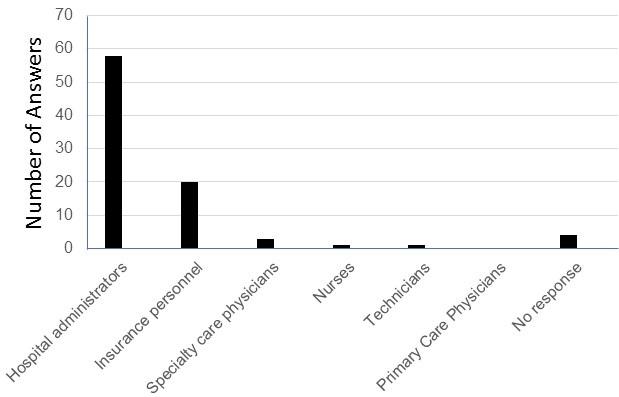

Feedback on the educational program was received from the participants and summarized in a word cloud (Figure 1). The majority of trainees felt that videos, anatomy diagrams, case examples, and small groups for the practice sessions were the most helpful in learning POCUS. On the other hand, several trainees expressed wanting a cardiac ultrasound station, even smaller groups, or more time during ultrasound stations to give each participant plenty of time to practice. Overall, the trainees believed the POCUS bootcamp was highly informative and would be beneficial during their rotations.

Figure 1. Word cloud of the most stated feedback of the sessions was created using Microsoft PowerPoint. Overall, residents felt that the POCUS bootcamp was very helpful when using videos, case examples, and small groups. Areas of improvement included residents wanting more practice time and a cardiac ultrasound session. Figure courtesy of Mariel Ma, MD; Department of Internal Medicine, University of Arizona, Phoenix, AZ.

Discussion

We found that a one-day, 8-hour POCUS training session was not only feasible but effective in teaching internal medicine trainees ultrasound skills. All the trainees increased their knowledge of POCUS after brief lectures and practice sessions, as evidenced by improvement on a post-didactic assessment. The curriculum produced a statistically significant increase in percent correct on every question except the one regarding ultrasound settings. Trainees demonstrated growth in pivotal areas for mastering POCUS: identifying ultrasound probes, operating probes, and using M-mode. They also reported being more likely to incorporate POCUS into practice. The program was divided between formal didactics and performing ultrasound scans on volunteer human models. This pilot educational program format was feasible and can be utilized by other internal medicine residency programs to deliver formal ultrasound training.

POCUS has become a useful tool for diagnosing problems and performing procedures because a physician can quickly obtain information at the bedside (1-4). Ultrasound training has been integrated into emergency medicine residencies as a required skill for residents to obtain. There has been growing interest in bringing ultrasound training into internal medicine residencies (7). However, many internal medicine residency programs have not yet established a formal curriculum. We successfully implemented a one-day educational POCUS program, and our trainees showed a significant improvement in their knowledge of ultrasound. Our hospital provides formal ultrasound machines and portable handheld ultrasound devices that trainees can borrow. The goal of the bootcamp session was to give trainees foundational knowledge in ultrasound that they could apply in their clinical practice.

Future directions for this curriculum include developing it into a yearly course, and we have already given another POCUS training session to the next class of first-year trainees. We also plan to hold multiple dedicated workshops throughout the year to allow more time for trainees to learn complex skills such as cardiac ultrasound and the BLUE decision tree. Since PGY1 trainees participated in the study, we will be able to monitor their progress as we create more POCUS bootcamp sessions. Another assessment should be given to the same participants to evaluate their knowledge and skill retention. We would also include opportunities to provide feedback on ultrasound imaging. Lastly, we have been piloting a POCUS training pathway for trainees who want to further develop their ultrasound skills and obtain formal POCUS certification.

Strengths of our curriculum include its design as a one-day bootcamp training session and its focus on high-yield applications of ultrasound (4,5,15). We were also able to include the entire first-year class of internal medicine trainees, and faculty proficient in ultrasound were directly involved in teaching. Our program incorporated time for practical experience, which has been demonstrated to improve trainees’ confidence and skills in previous studies on POCUS education (16-18). Trainees were able to compare portable handheld ultrasound devices with conventional ultrasound machines. While both the handheld and formal ultrasound machines were easy to use, the formal ultrasound machine offered better visualization of anatomical structures. In addition, trainees had to be familiar with the several types of probes when using the formal ultrasound machine, as opposed to a portable one that has built-in settings that can be easily changed using the same device. Trainees were able to learn the differences between probes, and as the practice sessions progressed, they became more familiar with them. Other strengths of the study were the use of pretest and posttest surveys to objectively assess trainees’ knowledge, as well as a secondary analysis of their feedback.

Limitations of the study were the small sample size, the focus on PGY1 trainees, and the inability to assess skills and knowledge long-term. Due to timing and scheduling feasibility, the pilot program focused only on the PGY1 class, and our findings do not fully represent the rest of the internal medicine residency program. It is also possible that participants started with varying levels of ultrasound experience, which was not considered in the surveys. Our curriculum was conducted in one day, and we do not know if the trainees utilized ultrasound later. Other studies focused on ultrasound training assessed participants’ retention and skills longitudinally (12,17,19). Nevertheless, short-term POCUS curricula have shown benefit for trainees, supporting the idea that even brief sessions can be effective (16,18,20). To further develop competency in POCUS, future curriculum designs should include time to review images with faculty.

Conclusion

In summary, internal medicine PGY1 trainees successfully completed an 8-hour ultrasound training session, significantly improved their knowledge of POCUS, and were more likely to incorporate POCUS into their clinical practice after the program.

Acknowledgements

All authors were involved in the design, execution, writing and analysis of this study. Mariel Ma, MD also created the tables and figures, had full access to the data, and will vouch for the integrity of the data analysis. The authors received no sources of funding for this research and there are no disclosures.

Supplementary Material

The survey material shows examples of the survey questions given before and after the educational program. Questions for feedback were not included in the supplementary material. Each participant was given a unique identifier (number); therefore, the investigators could not ascertain the identity of individuals from the information. Deidentified data was stored in an Excel Spreadsheet. Pictures of ultrasound images are not included in this sample survey. The correct answers are in bold.

References

1. Ramgobin D, Gupta V, Mittal R, Su L, Patel MA, Shaheen N, Gupta S, Jain R. POCUS in Internal Medicine Curriculum: Quest for the Holy-Grail of Modern Medicine. J Community Hosp Intern Med Perspect. 2022 Sep 9;12(5):36-42.

2. LoPresti CM, Schnobrich DJ, Dversdal RK, Schembri F. A road map for point-of-care ultrasound training in internal medicine residency. Ultrasound J. 2019 May 9;11(1):10.

3. Micks T, Braganza D, Peng S, McCarthy P, Sue K, Doran P, Hall J, Holman H, O'Keefe D, Rogers P, Steinmetz P. Canadian national survey of point-of-care ultrasound training in family medicine residency programs. Can Fam Physician. 2018 Oct;64(10):e462-e467.

4. Ma IWY, Arishenkoff S, Wiseman J, Desy J, Ailon J, Martin L, Otremba M, Halman S, Willemot P, Blouw M; Canadian Internal Medicine Ultrasound (CIMUS) Group. Internal Medicine Point-of-Care Ultrasound Curriculum: Consensus Recommendations from the Canadian Internal Medicine Ultrasound (CIMUS) Group. J Gen Intern Med. 2017 Sep;32(9):1052-1057.

5. Watson K, Lam A, Arishenkoff S, Halman S, Gibson NE, Yu J, Myers K, Mintz M, Ma IWY. Point of care ultrasound training for internal medicine: a Canadian multi-centre learner needs assessment study. BMC Med Educ. 2018 Sep 20;18(1):217.

6. Sorensen B, Hunskaar S. Point-of-care ultrasound in primary care: a systematic review of generalist performed point-of-care ultrasound in unselected populations. Ultrasound J. 2019 Nov 19;11(1):31.

7. Olgers TJ, Ter Maaten JC. Point-of-care ultrasound curriculum for internal medicine residents: what do you desire? A national survey. BMC Med Educ. 2020 Jan 31;20(1):30.

8. American College of Physicians. Point of care ultrasound (POCUS) for internal medicine. Available at: https://www.acponline.org/meetings-courses/focused-topics/point-of-care-ultrasound-pocus-for-internal-medicine/acp-statement-in-support-of-point-of-care-ultrasound-in-internal-medicine (accessed May 28, 2024).

9. Soni NJ, Schnobrich D, Mathews BK, et al. Point-of-Care Ultrasound for Hospitalists: A Position Statement of the Society of Hospital Medicine. J Hosp Med. 2019 Jan 2;14:E1-E6.

10. Badejoko SO, Nso N, Buhari C, Amr O, Erwin JP 3rd. Point-of-Care Ultrasound Overview and Curriculum Implementation in Internal Medicine Residency Training Programs in the United States. Cureus. 2023 Aug 5;15(8):e42997.

11. Reaume M, Siuba M, Wagner M, Woodwyk A, Melgar TA. Prevalence and Scope of Point-of-Care Ultrasound Education in Internal Medicine, Pediatric, and Medicine-Pediatric Residency Programs in the United States. J Ultrasound Med. 2019 Jun;38(6):1433-1439.

12. Nathanson R, Le MT, Proud KC, et al. Development of a Point-of-Care Ultrasound Track for Internal Medicine Residents. J Gen Intern Med. 2022 Jul;37(9):2308-2313.

13. Schnittke N, Damewood S. Identifying and Overcoming Barriers to Resident Use of Point-of-Care Ultrasound. West J Emerg Med. 2019 Oct 14;20(6):918-925.

14. Schnobrich DJ, Gladding S, Olson AP, Duran-Nelson A. Point-of-Care Ultrasound in Internal Medicine: A National Survey of Educational Leadership. J Grad Med Educ. 2013 Sep;5(3):498-502. doi: 10.4300/JGME-D-12-00215.1. Erratum in: J Grad Med Educ. 2019 Dec;11(6):742.

15. Rosana M, Asmara OD, Pribadi RR, Kalista KF, Harimurti K. Internal Medicine Residents' Perceptions of Point-of-Care Ultrasound in Residency Program: Highlighting the Unmet Needs. Acta Med Indones. 2021 Jul;53(3):299-307.

16. Keddis MT, Cullen MW, Reed DA, Halvorsen AJ, McDonald FS, Takahashi PY, Bhagra A. Effectiveness of an ultrasound training module for internal medicine residents. BMC Med Educ. 2011 Sep 28;11:75.

17. Dulohery MM, Stoven S, Kurklinsky AK, Halvorsen A, McDonald FS, Bhagra A. Ultrasound for internal medicine physicians: the future of the physical examination. J Ultrasound Med. 2014 Jun;33(6):1005-11.

18. Haghighat L, Israel H, Jordan E, Bernstein EL, Varghese M, Cherry BM, Van Tonder R, Honiden S, Liu R, Sankey C. Development and Evaluation of Resident-Championed Point-of-Care Ultrasound Curriculum for Internal Medicine Residents. POCUS J. 2021 Nov 23;6(2):103-108.

19. Mellor TE, Junga Z, Ordway S, et al. Not Just Hocus POCUS: Implementation of a Point of Care Ultrasound Curriculum for Internal Medicine Trainees at a Large Residency Program. Mil Med. 2019 Dec 1;184(11-12):901-906.

20. Geis RN, Kavanaugh MJ, Palma J, Speicher M, Kyle A, Croft J. Novel Internal Medicine Residency Ultrasound Curriculum Led by Critical Care and Emergency Medicine Staff. Mil Med. 2023 May 16;188(5-6):e936-e941.